Warning: this is a long read, and we make no apologies for it. This post explains, in as simple terms as possible, what it takes to optimise a web site so that it is lightning fast and can scale to handle very high numbers of visitors. The techniques we use apply to most of the applications our customers build their sites on, such as WordPress, Drupal, Joomla and Magento, amongst others. What's more, these techniques enable you to increase speed and capacity without great expense.

This isn't a technical rundown for industry experts; it's aimed at web site owners. If you run a web site that is growing in popularity, may need to handle a sudden surge of traffic, or which you simply want running at lightning fast speeds, this post will give you a basic understanding of what is involved.

The Basics

If you run a web site, it's vital to make sure that it works as quickly and efficiently as possible from the outset. A great looking site is a start - but if it's slow, you're going to hugely limit its success and you may run into capacity problems as your visitor numbers increase.

A fast site will:

- Make your site visitors happy. Nobody likes a slow web site. If your web site loads as quickly as possible you're far more likely to engage your visitors, and crucially, generate more revenue.

- Make search engines happy. Search engines love fast web sites, and incorporate speed in their ranking algorithm. The faster your web site loads, the better your ranking will be, the more visitors you stand to get.

- Make your server happy. If your site places a reduced strain on the server, you'll be able to handle many times more visitors without facing spiralling hosting costs.

- Make your wallet happy. See points 1, 2 and 3.

Optimising your web site to be as fast and efficient as possible starts at the source - the very code the web site runs on. Bloated, inefficient code will make the server work so much harder to output the final result. This results in slow page loads, and will mean that you can handle significantly fewer visitors without your hosting costs escalating. That's why it's so important to ensure that your web developer builds your site from the ground up with speed optimisation in mind. We've produced an article on how to optimise your web site for better performance - running through these steps is always the best place to start with improving web site speed.

Once your site is optimised as far as you can take it, if you still want things faster, the next step is to upgrade your hosting environment. If you're on shared hosting, the server your site runs on will be servicing hundreds of other web sites at the same time. Our shared servers are incredibly powerful machines which are optimised for this very purpose - but shared hosting will always carry a performance hit compared to more premium hosting options.

A hop up to our semi dedicated business web hosting plans will give you a jump in speed and allow you to handle more visitors. The extra cost buys you a slice of a server which has far fewer accounts, faster processors and a larger allocation of the server's processing capacity for your exclusive use.

A jump up to your own virtual or dedicated server will deliver even more speed, and allow you to handle a much larger number of visitors. The server is then only having to process requests for your web site, meaning that its entire resources can be dedicated to serving your site as fast as possible.

But what if you want to go even faster? What if it's impossible to optimise your code any further? What if you need to handle hundreds of thousands of visitors, or handle rapid bursts of traffic that might arrive if you go viral or get featured on television?

It's not the size of the dog in the fight...

One way to continuously improve speed and performance is to add more computing power. Faster servers, more CPU cores, more memory or even adding more servers will improve your speed. You may eventually get the performance you need, but there will almost certainly be a simpler way to get there.

To draw an analogy, you could cross the Atlantic in a rowing boat; it'd be slow, the journey would be treacherous and you may well end up 30 leagues under before you know it. Making the crossing in a larger motorboat will get you there faster, and although it may be choppy, you'll probably make it in one piece. You could continue crossing in bigger and better boats, and with each bigger and better boat you'd get there faster and the journey would be smoother. Most likely, we'd all still be happily crossing the oceans like that today, had the Wright Brothers not found a better way. We didn't need a bigger boat, we needed a plane.

To borrow a line often attributed to Mark Twain, "it's not the size of the dog in the fight... but the size of the fight in the dog". When you're looking to speed up your web site or handle more traffic, simply adding more computing power is like trying to cross the Atlantic faster by using a bigger boat. What you really need is a totally different approach. With intelligent engineering, you can find a completely different way to solve the same problem - you can serve more visitors with a lightning fast web site without spending a penny more than you have to on your server costs. You might even be able to reduce your costs.

There are many ways to achieve this, but in what follows I'm going to focus on three tools we use that work really well with the apps our customers typically use. These three components work together to allow you to serve extremely high volumes of traffic, handle sudden traffic spikes with ease, and make your web site load faster than greased lightning from anywhere in the world. They are Redis, Varnish and CloudFlare Railgun, and best of all, these tools are free. You just need a virtual or dedicated server and a sprinkling of magic from our server gurus, and these tools will work in tandem to optimise delivery of your site.

So let's get stuck in.

First up is Redis. Redis is like an iceberg: most of what it can do lies beneath the surface, and for most use cases we only need the tip - data caching. In simple terms, Redis can be used as a very fast way to cache data in memory. One of the pain points that makes web sites slow is the time it takes to query a database, which is often where much of the information needed to display the page is stored. This is particularly true of busy sites that have lots of visitors and make lots of database queries. Redis can be used to cache some or all of your database in memory, and reading data from memory takes a fraction of the time needed to read it from much slower hard disks.

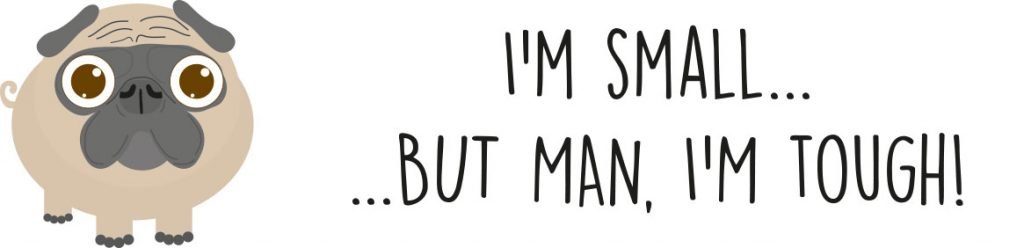

The above graph shows the effect of enabling Redis on a regular WordPress web site. Before Redis is enabled, the time the database takes to respond is many times larger and much more spiky. Once Redis is added, the data queries become much faster and the overall response time of the site improves. What's more, any data set can be stored in Redis, so it's also really useful for speeding up web sites that rely on external APIs. For instance, on our own web site we query various external sources such as TrustPilot reviews, recent blog posts and Twitter. These are cached directly in Redis so that the data is available instantly, without needing to make a live query to the external API or even build a MySQL table to store that data in.

The good news is that the majority of the applications our clients use to build their web sites already support Redis natively, or can do so through a plugin. Enhancing performance with Redis is therefore incredibly simple - we just need to install Redis, configure it and make a small change in your application code, and you'll experience an immediate speed boost.

Varnish is a web application accelerator, which sits in front of the web server. To understand what Varnish does, it's first important to understand what happens normally, without Varnish. When a visitor accesses your web site, the web server will receive a request for a page, which will then spawn more requests to find all the components needed to display your page. Typically, all your images, CSS files, JavaScript and PHP scripts will be requested, PHP code will execute, MySQL queries will be made and data returned, and eventually the components and resulting HTML will be downloaded to the visitor's browser. That's an awful lot of work. When you've got lots of people accessing your web site, your web server is working overtime trying to deliver all of these requests to all of these visitors. Often, it's doing all of this work to generate the exact same content for different visitors.

With Varnish, all that hard work only needs to be done once, or at least only until the content or page itself changes. Once it's got all the necessary data to display the web page, Varnish caches that data in memory. Now, when the next visitor arrives, your web server can put its feet up and let Varnish simply return the ready made page and all the components in milliseconds, without a single request to disk, MySQL query or PHP execution. This means that your web server only needs to do the hard work when the requested data has changed, freeing it up to handle many times more requests than it would otherwise have been capable.
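The idea can be sketched in a few lines of Python. This is a toy model of a page cache sitting in front of a backend, not Varnish's actual implementation or its configuration language. The `PageCache` class, the `render` function and the `content` dictionary are all hypothetical names made up for the illustration.

```python
class PageCache:
    """Toy full-page cache sitting in front of a backend render function."""

    def __init__(self, backend):
        self.backend = backend
        self.pages = {}
        self.hits = 0
        self.misses = 0

    def fetch(self, url):
        if url in self.pages:
            self.hits += 1
            return self.pages[url]
        self.misses += 1
        body = self.backend(url)  # the expensive work happens only here
        self.pages[url] = body
        return body

    def purge(self, url):
        """Forget a page so that the next request regenerates it."""
        self.pages.pop(url, None)


content = {"/": "Welcome"}


def render(url):
    """Hypothetical backend standing in for PHP plus MySQL."""
    return f"<html><body>{content[url]}</body></html>"


cache = PageCache(render)
for _ in range(1000):
    cache.fetch("/")
print(cache.hits, cache.misses)  # prints: 999 1

# When the content changes, the cached copy has to be cleared.
content["/"] = "Welcome back"
cache.purge("/")
print(cache.fetch("/"))  # the backend runs once more and returns the new page
```

Out of 1,000 requests for the same page, the pretend backend only had to do the work once. The `purge` step matters just as much as the caching: without it, visitors would keep seeing the old page after the content changed.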

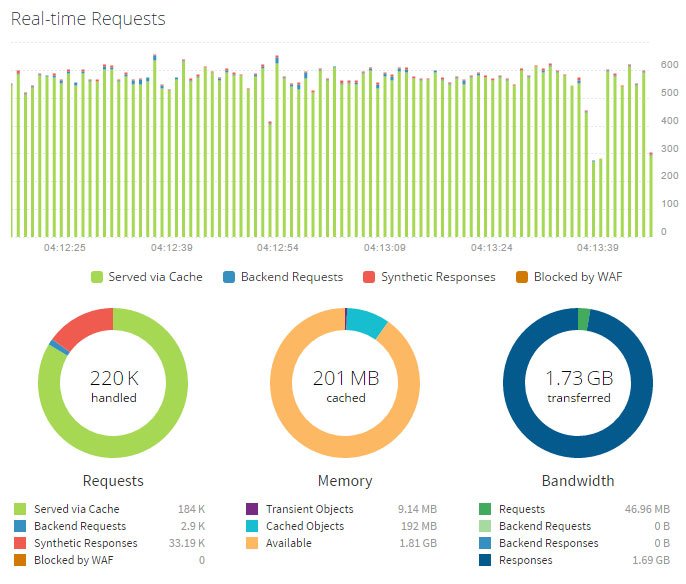

The graph above shows a Varnish monitoring page. The bar graph at the top is a real time overview of traffic: the green represents requests served from Varnish's cache, the red may be a browser redirect or an error message (e.g. a 404 page not found) and the tiny blue portion is the requests that actually have to be processed by the web server. In the above example, only 1.4% of traffic to this particular web site was actually processed by the backend, meaning that the web server had to process 98.6% fewer requests than it would otherwise have had to, and visitors enjoyed super fast page load times.

As with Redis, most common applications our customers use support Varnish. There may, however, be some configuration work needed to fine tune Varnish for your particular use case, and to make sure that it knows when to clear what it's cached.

Varnish is fast, really fast. It typically speeds up delivery by a factor of 300 to 1,000, and will allow your server to handle huge volumes of traffic. No need to buy a bigger boat, we're now flying a plane.

So both Varnish and Redis are server level caching solutions: software that sits on your server, making things super snappy. By contrast, CloudFlare is a network level caching layer, and a big one at that. Let's say your VPS is in our UK datacentre, but you've got lots of visitors to your web site in the US. Normally when they load your web site, all of the components that make it up will travel across fibre-optic cables under the Atlantic. Now this happens incredibly quickly, but it would be much quicker if that data didn't have to travel all those thousands of miles. Enabling CloudFlare means that your data can be cached in a datacentre that's closer to each of your visitors. The CloudFlare network is vast, with over 100 datacentres spanning every continent on Earth.

By default, CloudFlare will only cache your static data - for instance your images, JavaScript and CSS - which often forms the bulk of your web site's size. It can, with configuration, also cache your web site page content.

There are two major benefits to this network level caching.

Firstly, your web site will load incredibly quickly for your visitors wherever they are in the world. Your site should load pretty much as quickly for someone in Australia, Brazil or Japan as it does for someone in the UK (assuming that's where your Kualo server is).

Secondly, every request that CloudFlare serves is one less request that your server has to handle. The fewer requests your server handles, the less powerful that server has to be to serve the same number of visitors. This means that even if you don't have an international audience, CloudFlare will still take extra strain off your server and free up more resources, so you can handle more visitors for the same or less money.

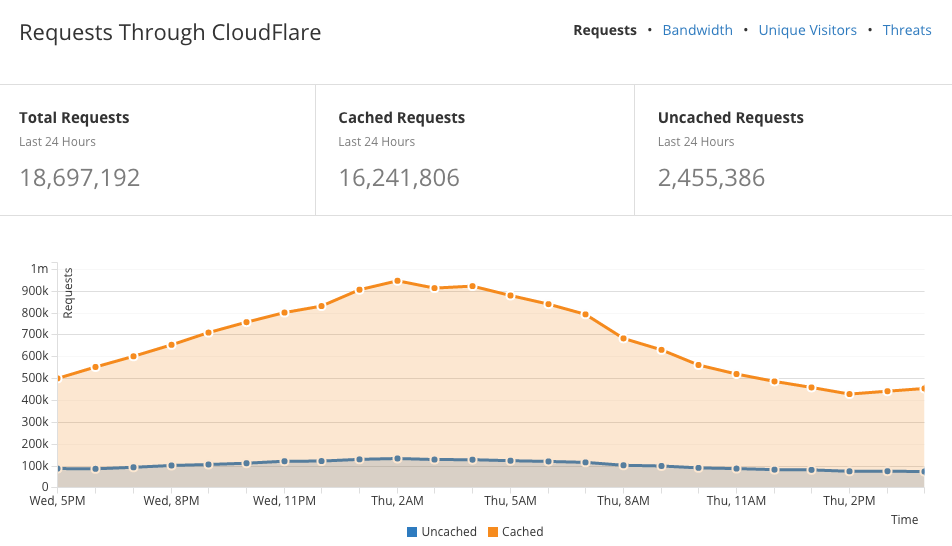

In the example above, the orange portion represents all the requests for a web site that were served from CloudFlare's network, and the blue portion represents requests that were passed to the server. Over 86% of the requests for this site over that 24 hour period were served from CloudFlare's network, meaning that your server can be much smaller and cheaper and still allow you to handle all those visitors. If you consider that many of the requests that did reach the server may well have been served by Varnish, maybe only 1-10% of them would have needed to be processed by the web server itself. That means only a tiny number of requests needed to run PHP or make a MySQL query - and the ones that do are going to be even faster than normal because of Redis.
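To see how the layers stack up, here is a quick back-of-the-envelope calculation in Python using the rough figures above: 86% of requests answered by CloudFlare, and somewhere between 1% and 10% of the remainder passed by Varnish to the web server. The function name is made up for the illustration, and the numbers are only as precise as those estimates.

```python
def origin_share(edge_hit_rate, varnish_pass_rate):
    """Fraction of all requests that reach PHP/MySQL after both cache layers."""
    reaches_server = 1.0 - edge_hit_rate
    return reaches_server * varnish_pass_rate


edge_hit_rate = 0.86  # share of requests served from CloudFlare's network
for varnish_pass_rate in (0.01, 0.10):  # the 1-10% range mentioned above
    share = origin_share(edge_hit_rate, varnish_pass_rate)
    print(f"{varnish_pass_rate:.0%} passed by Varnish: "
          f"{share:.2%} of all requests reach the web server")
```

On those assumptions, somewhere between 0.14% and 1.4% of the total requests would end up running PHP or querying MySQL.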

The picture gets even better when you consider the bandwidth savings. Any data that's served from CloudFlare saves you bandwidth - you can expect your bandwidth usage to go down by a similar percentage as your requests, if not even more.

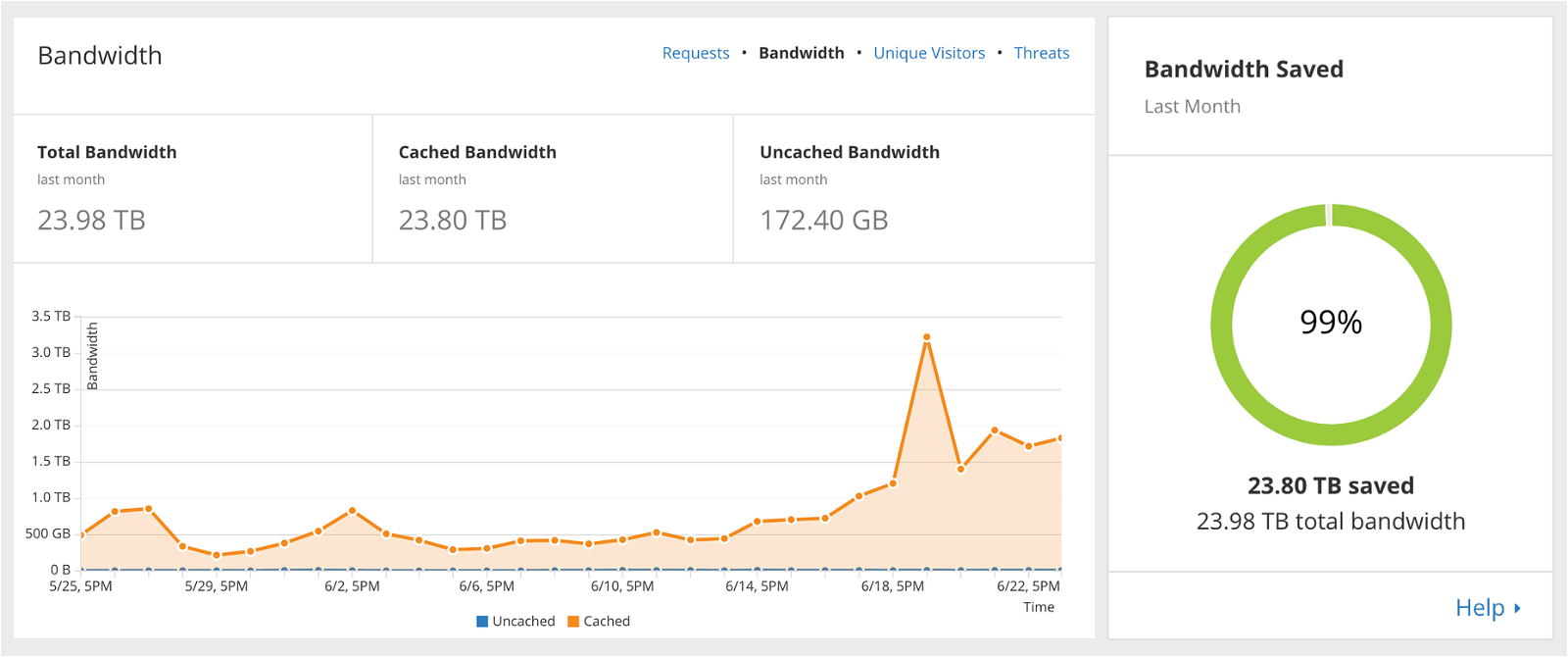

The graph above shows the bandwidth savings of a very busy web site that has been highly cached by CloudFlare. In this particular case, CloudFlare served up 99% of the bandwidth required by the web site, saving the customer over 23TB of bandwidth in a month. At that scale, this represents a huge cost reduction.

Pretty phenomenal, right? We're only just getting started. Enter Railgun.

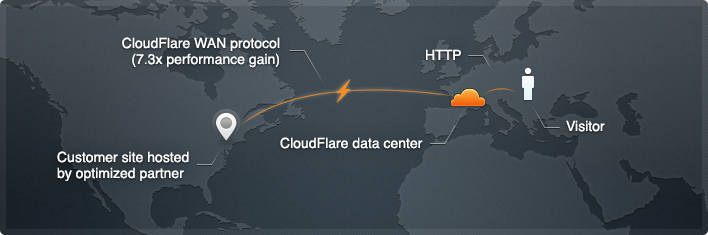

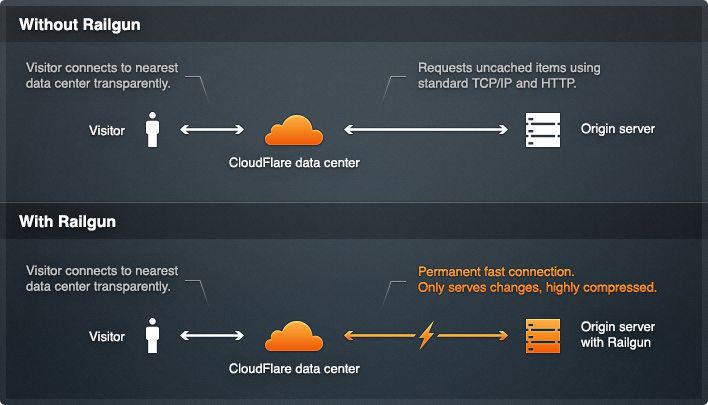

Railgun makes web sites even faster. Kualo is an 'Optimized CloudFlare Partner', which means you don't need to pay the $200/mo fee they usually charge to enable Railgun - you can use it for free. We've installed special 'listener' software on our network which gives us a fast, secure and always-on connection to the CloudFlare network. When CloudFlare requests data from your server, because that data has either changed or didn't already exist in that location's cache, it is transmitted to CloudFlare and on to the end user much faster than it would be normally.

What's more, Railgun speeds up re-caching of dynamic content by only transmitting what's changed. Let's say you have an e-commerce web site and you change out a featured product on the home page. Railgun will compare the cached version that it has already stored with the updated version, and will only send the bytes or even bits of data needed to effect that change on the resulting web page. This results in an average 200% additional performance increase.
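The "only send what's changed" idea can be illustrated with Python's standard `difflib` module. To be clear, this is a generic sketch of delta encoding and not Railgun's actual algorithm, which this post doesn't go into. The function names and the sample pages are made up for the example.

```python
import difflib


def make_delta(old, new):
    """Describe `new` as copy-from-old ranges plus literal new text."""
    delta = []
    matcher = difflib.SequenceMatcher(None, old, new, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            delta.append(("copy", i1, i2))      # the receiver already has this
        elif tag in ("replace", "insert"):
            delta.append(("data", new[j1:j2]))  # only this text must be sent
        # "delete" needs no entry: that range of old is simply never copied
    return delta


def apply_delta(old, delta):
    """Rebuild the new page from the cached old page and the delta."""
    parts = []
    for op in delta:
        if op[0] == "copy":
            parts.append(old[op[1]:op[2]])
        else:
            parts.append(op[1])
    return "".join(parts)


def literal_chars(delta):
    """Count the characters of new text carried in the delta."""
    return sum(len(op[1]) for op in delta if op[0] == "data")


template = ("<html><body><h1>Shop</h1><p>Featured: {}</p>"
            + "<p>Other content</p>" * 50
            + "</body></html>")
old_page = template.format("Red Kettle")
new_page = template.format("Blue Toaster")

delta = make_delta(old_page, new_page)
assert apply_delta(old_page, delta) == new_page
print(f"full page: {len(new_page)} characters, "
      f"new text in delta: {literal_chars(delta)} characters")
```

Swapping the featured product changes only a handful of characters, so the delta carries a tiny fraction of the full page while the receiving side can still rebuild the new version exactly.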

Again, we're only touching the tip of the iceberg, and there are a myriad of other features and advantages in CloudFlare that can add speed and security benefits. With CloudFlare and Railgun in the mix, we're no longer just flying any old plane - we're on a rocket.

How much faster will my site run?

Just how fast your site will run and how many visitors you can handle will depend on a number of factors, such as how big your images are, how often your content changes, how many visitors you have and whether your site serves anonymous or logged in users, to name a few.

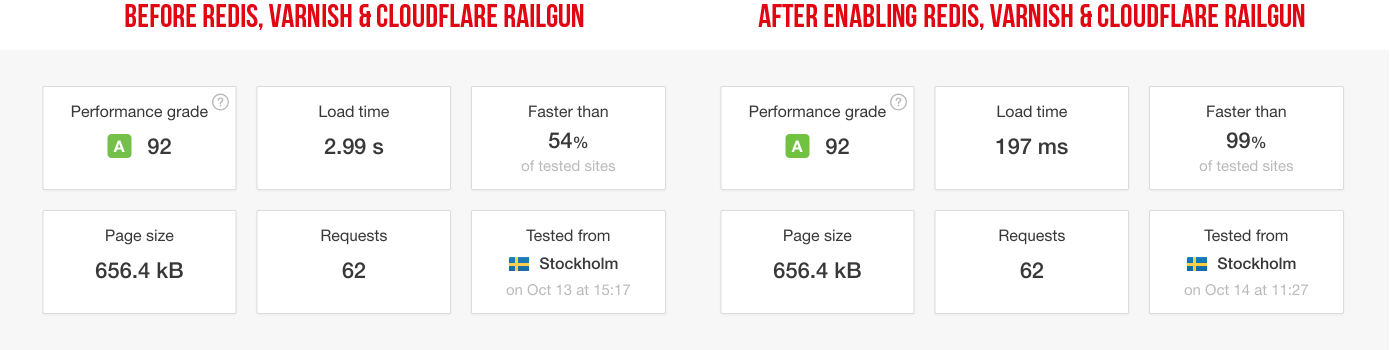

Whilst it's impossible to put a number on it, we can show you some real world examples. The following screenshot shows the results of a Pingdom speed test before and after enabling Redis, Varnish & CloudFlare Railgun.

Both tests were run against a virtual server of the same specification; the only difference is that Redis, Varnish and CloudFlare Railgun are working their magic. In this instance the site is fairly small in size, and has relatively few requests. Without our optimisations, it took 2.99 seconds to load from Pingdom's test location in Stockholm, which was the closest test location to our UK datacentre. Once Redis, Varnish and CloudFlare Railgun were configured, we were able to achieve a load time that was 1,517% faster.

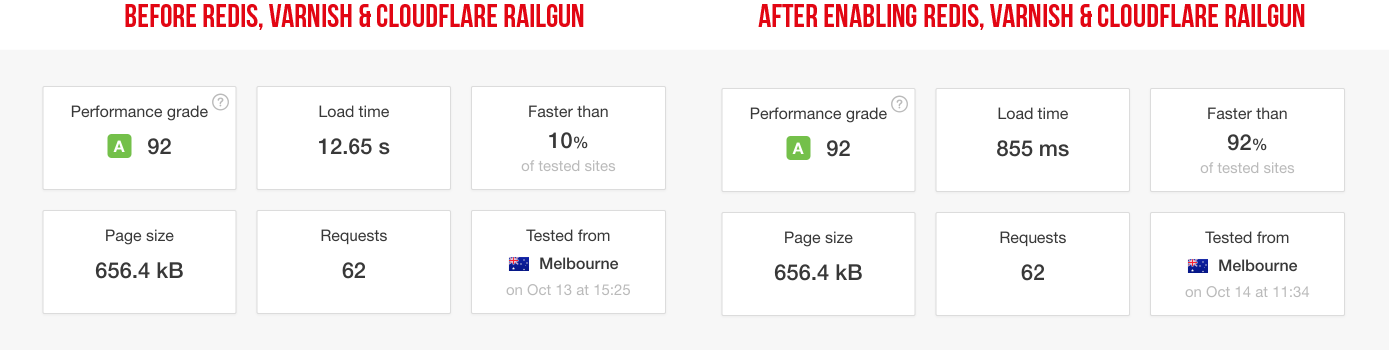

We also ran the same test from Pingdom's Melbourne location. The original time taken to load the site was considerably more as all of that data had to travel over 10,500 miles across many networks to get there. With CloudFlare and Railgun, thanks to the fact much of the site data is already cached locally in Melbourne, this load time is substantially reduced.
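To get a feel for why distance matters, here is a rough Python estimate of the minimum time a single round trip takes over that distance. It assumes light travels through fibre at about 200,000 km per second (roughly two thirds of its speed in a vacuum) and ignores routing detours and processing delays, so real figures will be higher.

```python
MILES_TO_KM = 1.609344
FIBRE_SPEED_KM_PER_S = 200_000  # assumption: about 2/3 of the speed of light


def min_round_trip_ms(distance_miles):
    """Lower bound on one round trip over fibre, ignoring routing and processing."""
    distance_km = distance_miles * MILES_TO_KM
    return 2 * distance_km / FIBRE_SPEED_KM_PER_S * 1000


print(f"{min_round_trip_ms(10_500):.0f} ms per round trip")  # prints: 169 ms per round trip
```

Loading a page takes many round trips (setting up the connection, then fetching the page and each of its components), so a delay of this size adds up quickly. Serving content from a nearby cache removes most of those long-haul trips.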

After optimisation, the site loads in a still impressive 855ms, 1,480% faster than it would have before. There's a very good chance that this site would have had little to no traction in Australia without this optimisation.
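For anyone wondering what those percentages mean in seconds, here is a small Python conversion. It assumes "X% faster" means the load speed went up by X%, so the load time is divided by (1 + X/100). That reading is our assumption for this illustration, and the measured figures in the screenshots remain the authoritative ones.

```python
def time_after(before_s, percent_faster):
    """Load time after the speed-up, given the original load time."""
    return before_s / (1 + percent_faster / 100)


def time_before(after_s, percent_faster):
    """Original load time, given the load time after the speed-up."""
    return after_s * (1 + percent_faster / 100)


# Stockholm: 2.99 s before, 1,517% faster
print(f"Stockholm after: about {time_after(2.99, 1517):.3f} s")
# Melbourne: 855 ms after, 1,480% faster
print(f"Melbourne before: about {time_before(0.855, 1480):.2f} s")
```

On that reading, the Stockholm test drops from 2.99 seconds to under a fifth of a second, and the Melbourne test would have started out at well over ten seconds.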

These performance enhancements were achieved with zero extra investment in the server hardware, and can all be applied to a single virtual or dedicated server. If your site continues to grow, we can even take things to the next level with multiple servers, allowing you not only to handle more traffic, but also to eliminate points of failure so that your site is designed for high availability.

Are you ready to supercharge your web site? Do you have a site which is facing capacity issues?

Get in touch with us today and we can start the conversation. We're ready to give your web site the home it deserves.